Many websites, apps, and online platforms allow users to upload user-generated content (UGC). A Terms and Conditions agreement can help explain the rules and users' responsibilities concerning user-generated content.

This article explains what a Terms and Conditions agreement is, what user-generated content is, and how to address user-generated content in your Terms and Conditions agreement.

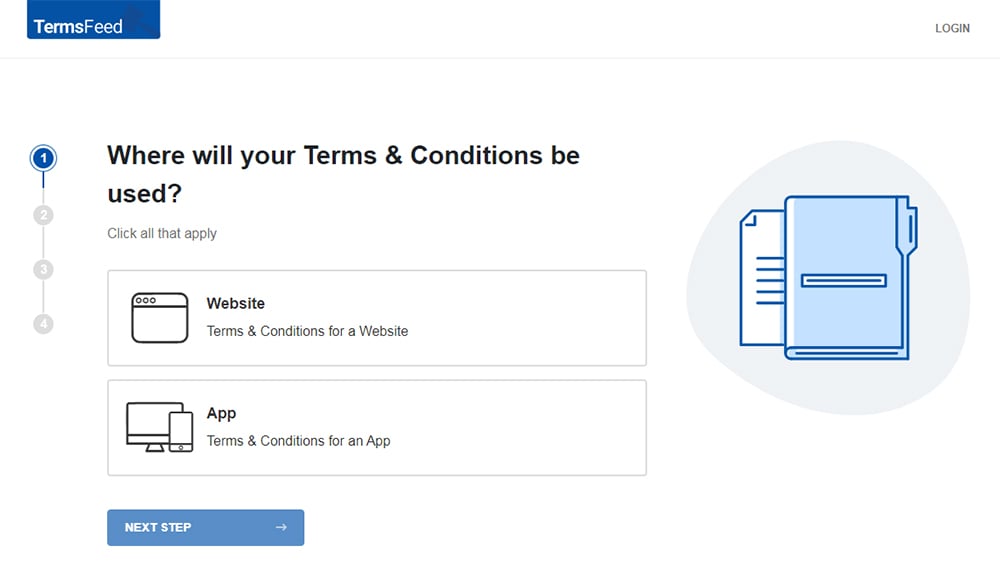

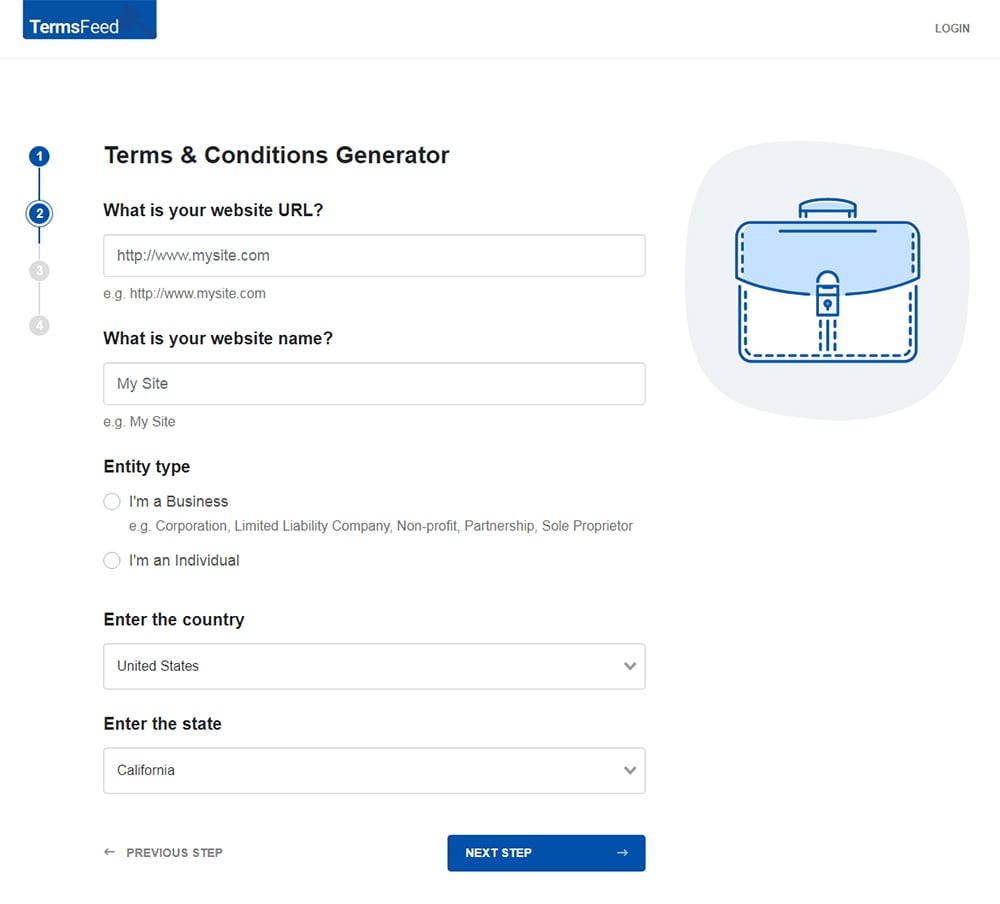

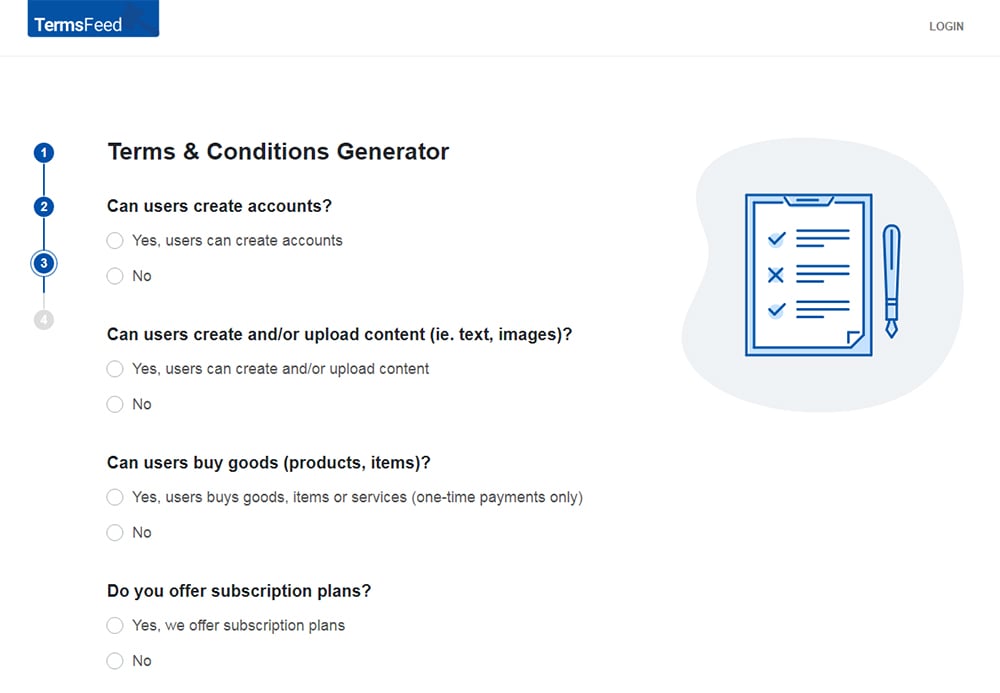

Our Terms and Conditions Generator makes it easy to create a Terms and Conditions agreement for your business. Just follow these steps:

-

At Step 1, select the Website option or the App option or both.

-

Answer some questions about your website or app.

-

Answer some questions about your business.

-

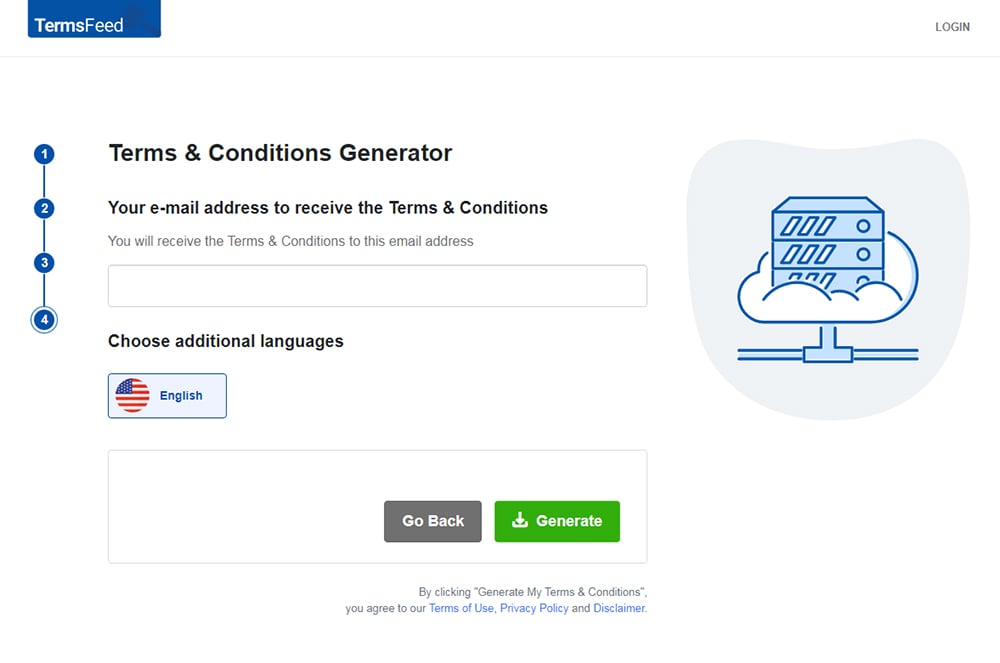

Enter the email address where you'd like the T&C delivered and click "Generate."

You'll be able to instantly access and download the Terms & Conditions agreement.

- 1. What is a Terms and Conditions Agreement?

- 2. Is a Terms and Conditions Agreement Legally Required for User-Generated Content?

- 3. What is User-Generated Content?

- 4. How Do You Address User-Generated Content in a Terms and Conditions Agreement?

- 4.1. Who Owns the User-Generated Content

- 4.2. What Types of User-Generated Content Cannot Be Uploaded

- 4.3. How User-Generated Content Can Be Used

- 4.4. How to Remove User-Generated Content

- 5. Where Do You Display a Terms and Conditions Agreement for User-Generated Content?

- 6. How Do You Get Users to Agree to a Terms and Conditions Agreement for User-Generated Content?

- 7. Summary

What is a Terms and Conditions Agreement?

A Terms and Conditions agreement is a legal document that describes the rules and responsibilities users must agree to in order to use your products, services, websites, or apps. This type of agreement is also called Terms of Use, Terms of Service, or just Terms.

Your Terms and Conditions agreement should be tailored to your unique business.

Depending on the scope of services provided, Terms and Conditions agreements often contain the following clauses:

- Acceptance of services

- Acceptable uses of services

- Restricted uses of services

- User-generated content

- Disclaimers of warranty/Limitation of liability

- Privacy information (with a link to the business's Privacy Policy)

- Payment and subscription billing information

- Third party information

- Grounds for termination

- Governing law

- Contact information

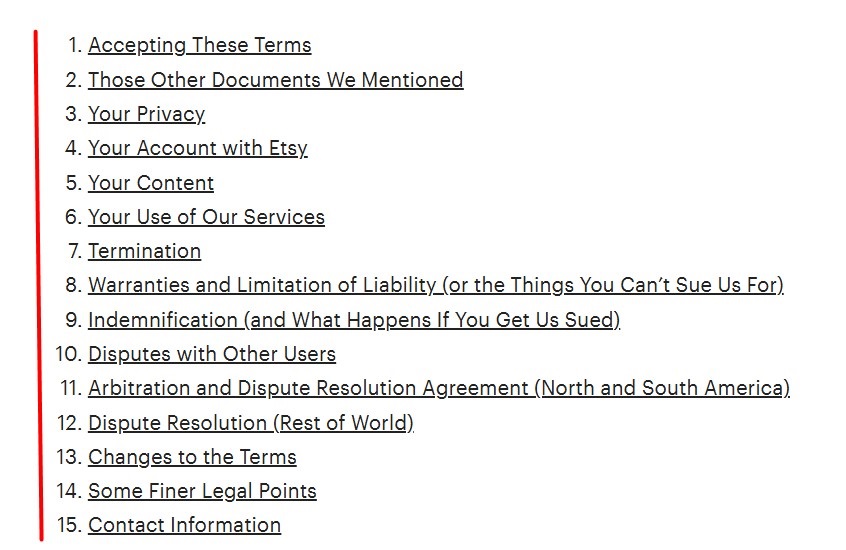

The table of contents for Etsy's Terms of Use agreement includes clauses about acceptance of its terms, users' privacy, user-generated content, termination, and warranties and limitation of liability, among others:

Is a Terms and Conditions Agreement Legally Required for User-Generated Content?

While not legally required, a Terms and Conditions agreement can help users understand their obligations, give you more control over your platform, and provide legal protection.

Without one, you will have less control over your platform and a weaker position when defending against legal claims. Users may not understand what rights they and you have when it comes to user-generated content, which could lead to lawsuits, unhappy users, and other issues.

What is User-Generated Content?

User-generated content is content such as text, images, videos, or audio recordings that users create and upload to a website, app, or online platform. Many businesses allow and encourage user-generated content as it can contribute an element of authenticity to their brand and function as free advertising.

Types of user-generated content can include the following:

- Social media posts, stories, tweets, and videos

- User testimonials or reviews

- Comments on blogs, articles, and social media posts

- Video uploads

- Podcasts, live streams, and music

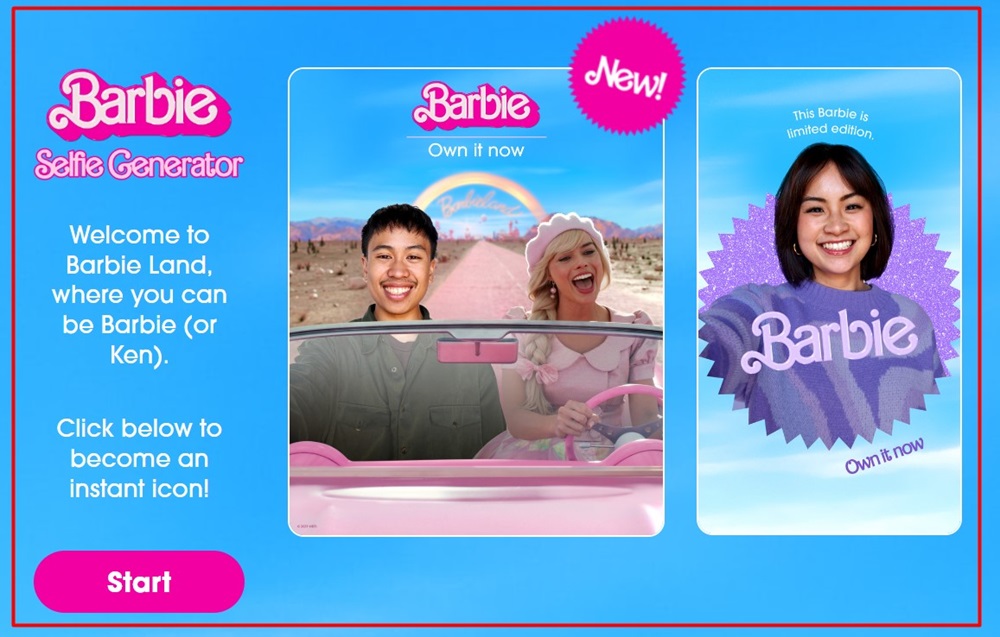

For example, Warner Bros. Entertainment's Barbie Selfie generator allows users to upload photos of themselves to create user-generated content:

How Do You Address User-Generated Content in a Terms and Conditions Agreement?

User-generated content can be challenging to control, especially if you have a lot of users. You may not have the time or manpower to screen content as it is uploaded, which opens the door for potentially abusive or otherwise inappropriate content.

By including clauses that cover user-generated content in your Terms and Conditions agreement, you can:

- Help ensure that users understand the guidelines pertaining to user-generated content

- Let users know that you have the right to remove their content or terminate their use of your services should they violate your terms

- Comply with third-party service providers that may require you to remove objectionable content

Let's look at some of the clauses regarding user-generated content you can include in your Terms and Conditions agreement.

Who Owns the User-Generated Content

This section explains who owns user-generated content once it has been uploaded. In many cases, users retain ownership of their content but automatically grant the platform a license to use it as the platform sees fit once it has been uploaded.

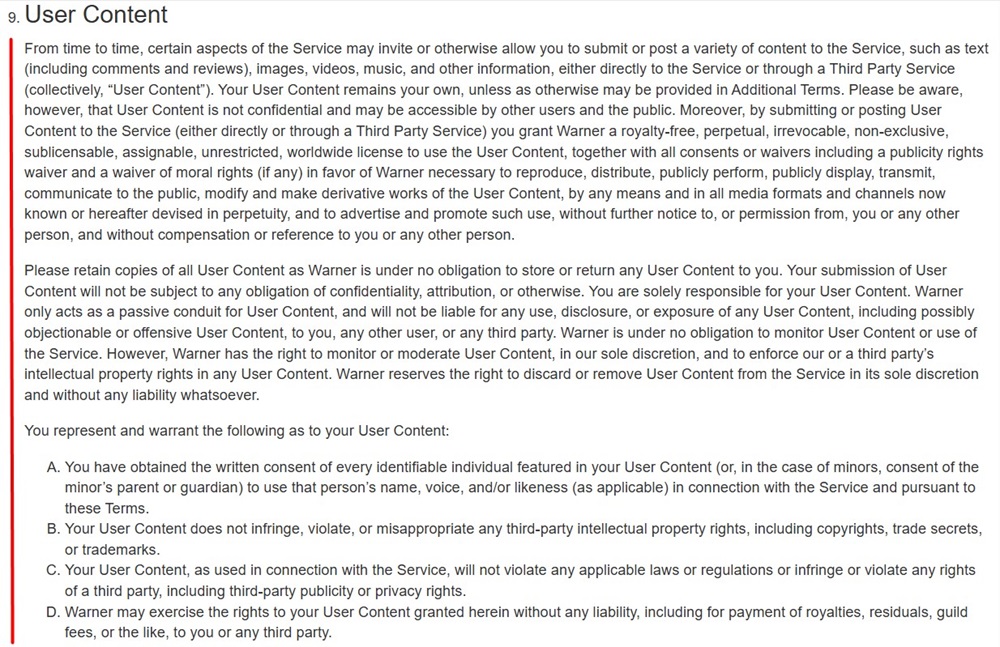

Warner Bros. Entertainment's Terms of Use explains that users retain ownership of their content unless otherwise specified in its Additional Terms. However, it also states that by submitting or posting user-generated content, users are signifying that they are agreeing to give it a license to use their content for public communication and advertisement purposes, among others:

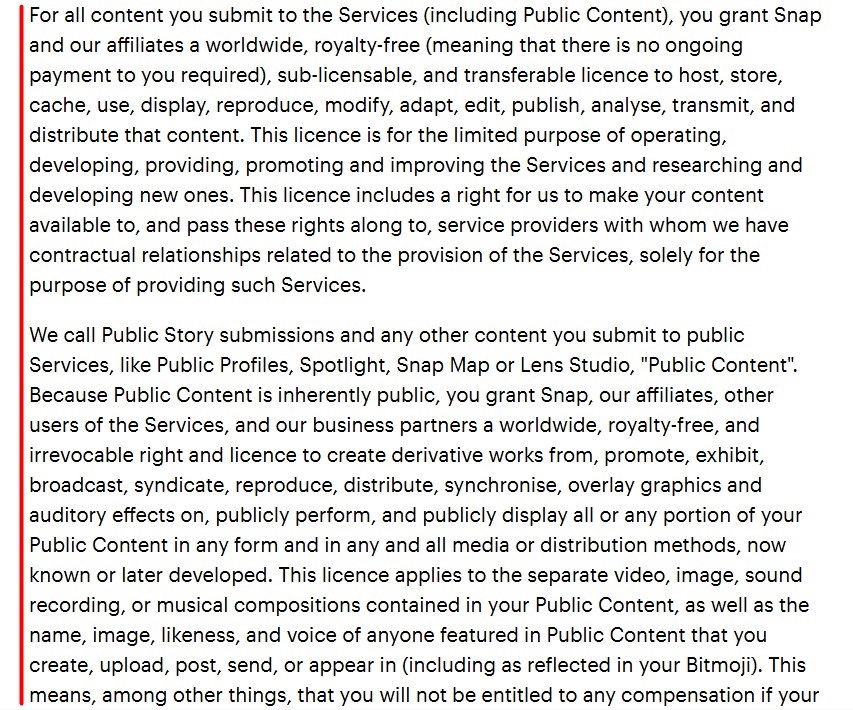

Similarly, Snap's Terms of Service lets users know that when they submit user-generated content (referred to by the company as "Public Content") they are granting it the right to reproduce, modify, distribute, and otherwise use the content for operating, promotional, research, and developmental purposes:

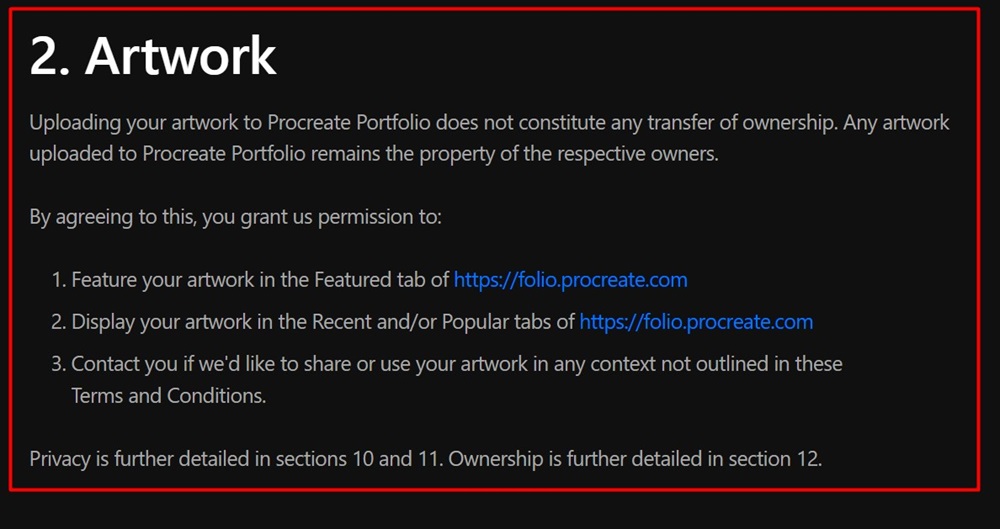

Procreate's Terms and Conditions agreement explains that users maintain ownership over their user-generated content, but that it has the right to feature and display users' artwork and contact users if they want to use the content for any other purposes:

What Types of User-Generated Content Cannot Be Uploaded

This clause lists restricted content, such as content that is abusive or contains adult or copyrighted materials.

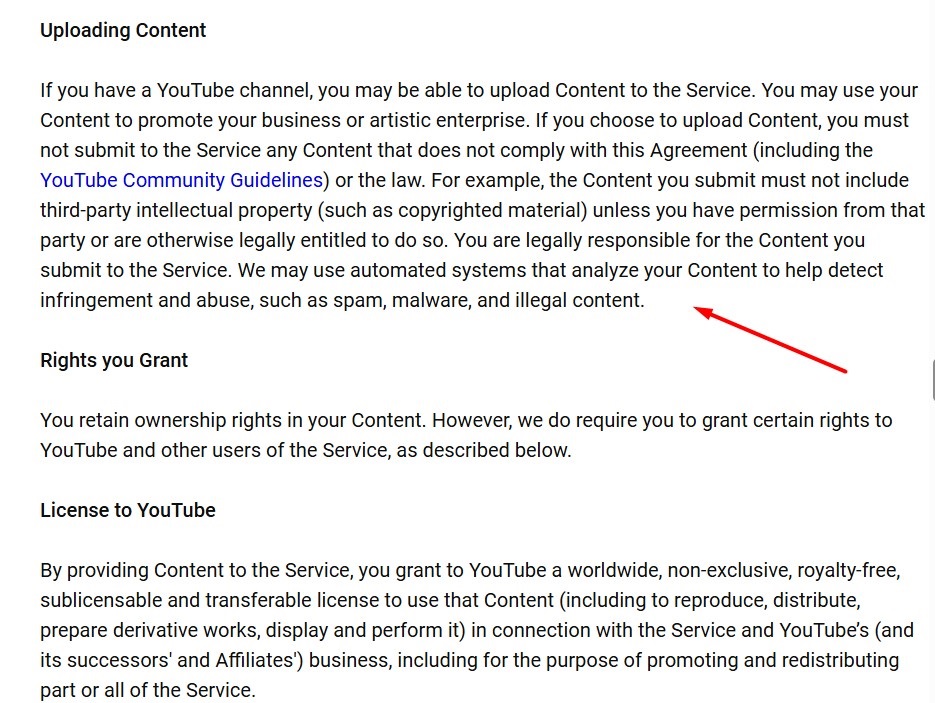

YouTube's Terms of Service explains that users cannot upload user-generated content that doesn't comply with the agreement or the law, or content that includes unauthorized copyrighted material, spam, or malware:

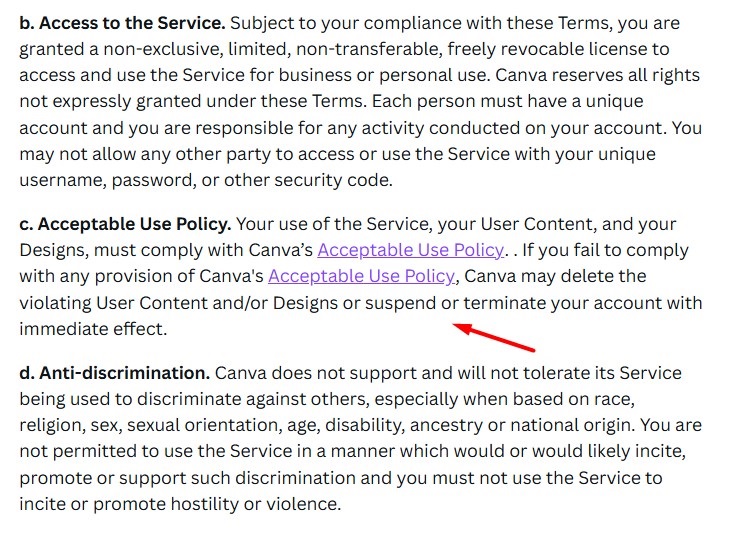

Canva's Terms of Use explains that it may delete user-generated content or terminate or suspend a user's account if their user-generated content doesn't comply with its linked Acceptable Use Policy:

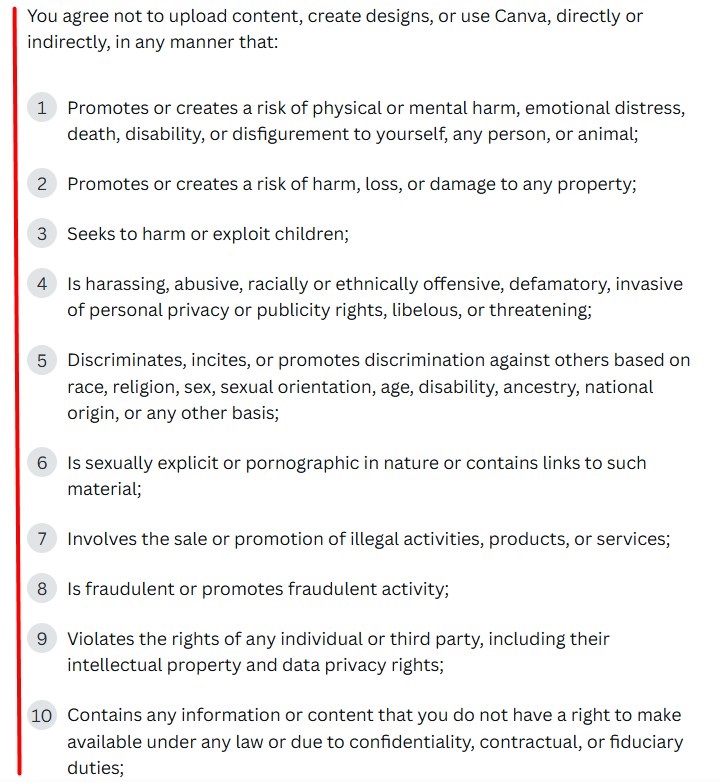

Users can find a list of restricted uses for user-generated content when they click on the link to Canva's Acceptable Use Policy. Prohibited content includes that which promotes a risk of harm or violates another person's rights, or content that is harassing, offensive, discriminatory, or sexually explicit:

How User-Generated Content Can Be Used

This part of your Terms and Conditions agreement explains authorized uses for user-generated content, such as sharing it with other users or uploading it as a comment or review.

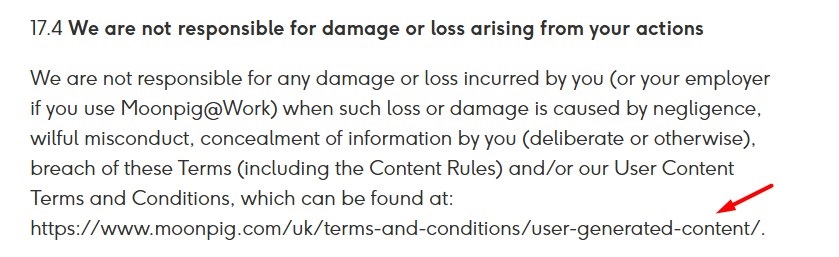

Moonpig's Terms and Conditions agreement includes the web address for its User Content Terms and Conditions agreement, where users can find information about acceptable uses of user-generated content:

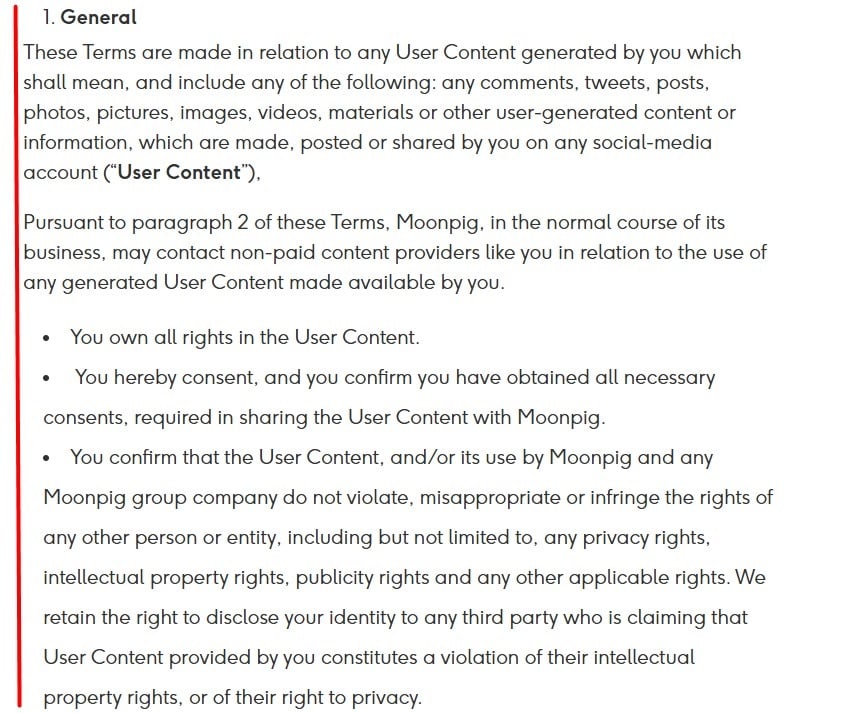

Moonpig's User Generated Content Terms and Conditions agreement lets users know the types of user-generated content the agreement applies to, including comments, tweets, posts, images, and videos that are shared on social media accounts. It allows these types of user-generated content as long as they don't infringe on other people's rights:

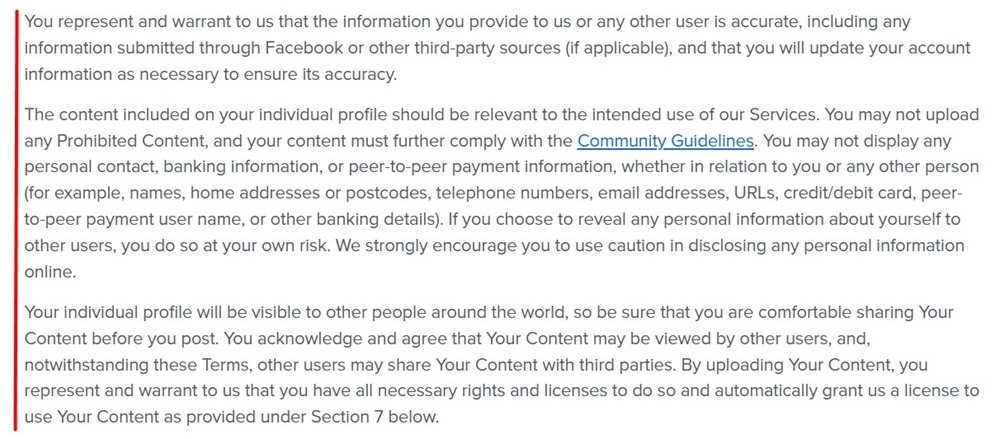

Tinder's Terms of Use explains that users can share content as long as it is accurate, relevant to their use of its services, and compliant with its Community Guidelines document:

How to Remove User-Generated Content

This clause lets users know how they can remove their content once it's uploaded, and the circumstances in which you may remove their content.

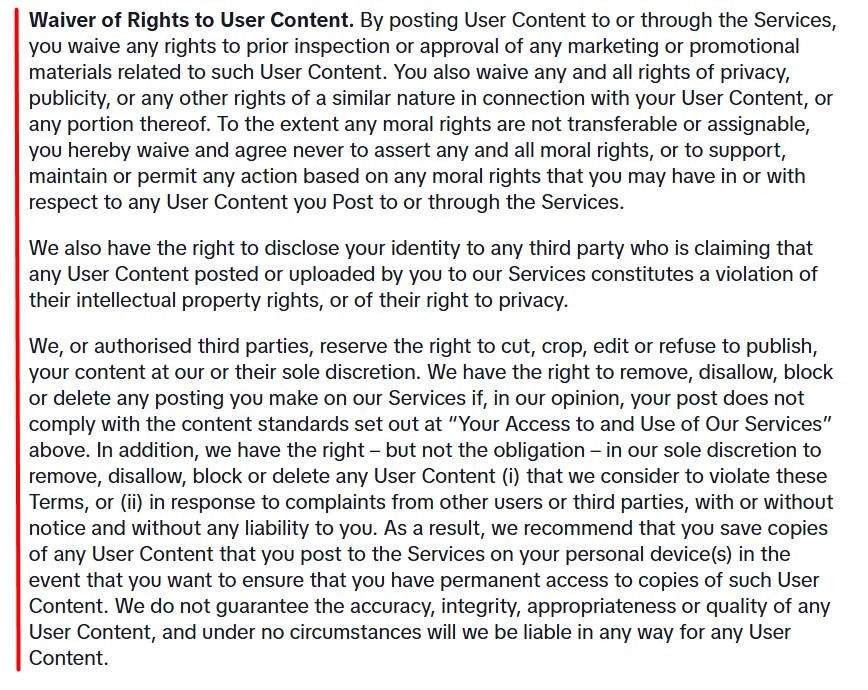

TikTok's Terms of Service explains that it and authorized third parties have the right to edit or refuse to publish user-generated content and that it may remove content and block posts from users who violate its content standards:

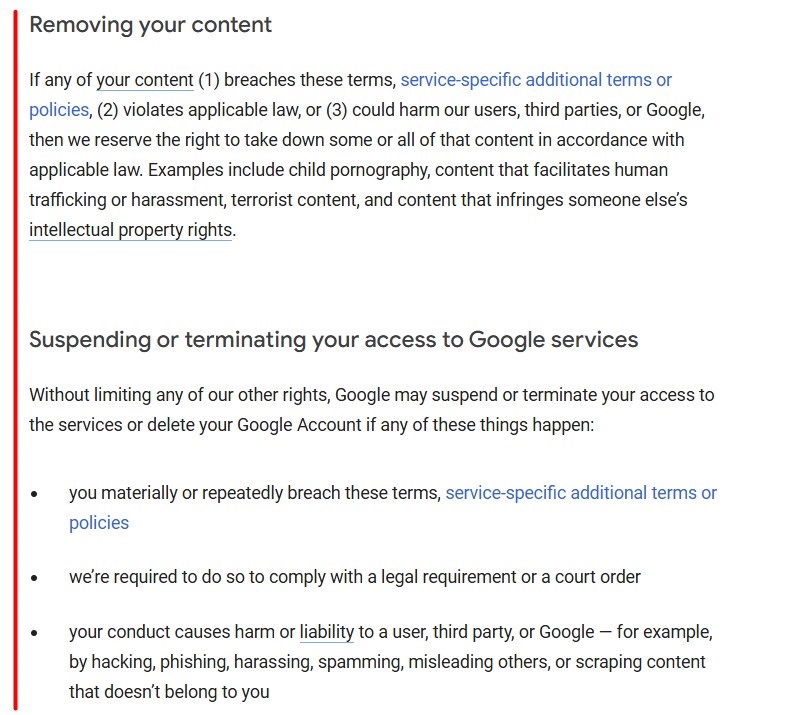

Google's Terms of Service lets users know that it will remove content that breaches its terms, is illegal, or could harm it or other users or third parties. It lists examples of content it will remove, including child pornography, content that enables human trafficking, and content that violates another person's intellectual property rights:

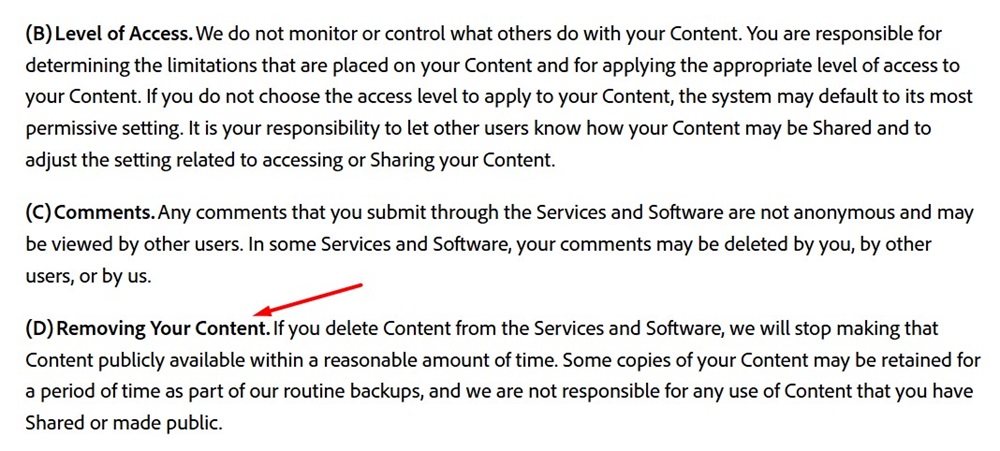

Adobe's Terms of Use explains that if a user decides to remove their content, it will stop making the content public:

Where Do You Display a Terms and Conditions Agreement for User-Generated Content?

After you draft your Terms and Conditions agreement to include relevant information about how user-generated content is handled, make sure you display a link to your agreement in places where users can find it easily at all times.

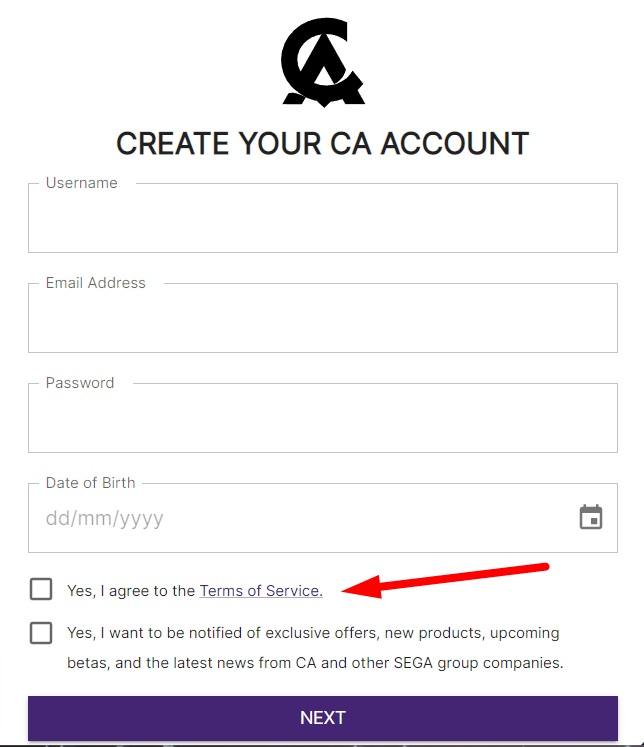

First, present the link at the point where users sign up for an account where they can submit user-generated content.

Here's an example of this:

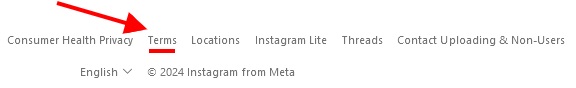

In addition to this, include a link to your Terms agreement in your site's footer or in an in-app menu. This lets users access the agreement at any time either before or after signing up.

Here's an example from Instagram:

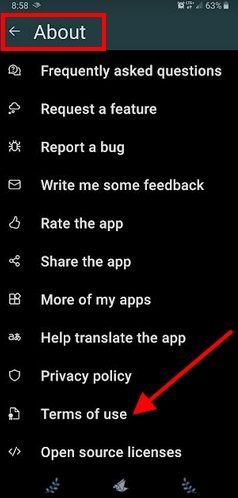

And here's an example of an in-app About menu that has a Terms agreement accessible with just a few taps:

How Do You Get Users to Agree to a Terms and Conditions Agreement for User-Generated Content?

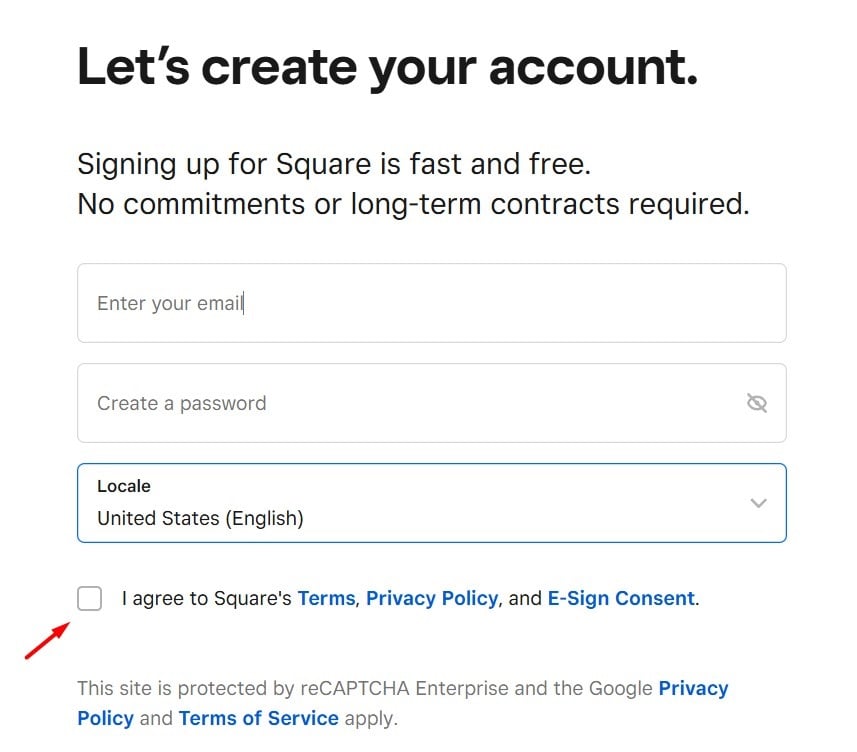

To help make your Terms and Conditions agreement legally enforceable, you can use an "I Agree" type of checkbox. This is a checkbox located next to a statement saying that users must agree to your Terms and Conditions agreement in order to use your services.

Users must tick the checkbox before taking certain actions, such as signing up for an account or making a purchase.

Before creating an account, users must first click a checkbox indicating that they agree to Square's Terms and Conditions agreement, Privacy Policy, and E-Sign Consent document:
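For developers implementing an "I Agree" checkbox, the gating logic described in this section might look something like the following minimal TypeScript sketch. It is only an illustration, not a definitive or legally vetted implementation. The names `SignupForm`, `ConsentRecord`, and `recordConsent` are hypothetical, and storing the agreement version and a timestamp is an assumption about what a business might want to keep, not a requirement stated in this article.

```typescript
// Hypothetical shape of a sign-up submission; field names are illustrative.
interface SignupForm {
  email: string;
  agreedToTerms: boolean; // state of the "I Agree" checkbox
}

// Hypothetical record of which version of the Terms the user accepted, and when.
interface ConsentRecord {
  email: string;
  termsVersion: string;
  agreedAt: string; // ISO 8601 timestamp
}

// Returns a consent record if the checkbox was ticked,
// or null if the sign-up should be blocked.
function recordConsent(
  form: SignupForm,
  termsVersion: string,
  now: Date = new Date()
): ConsentRecord | null {
  if (!form.agreedToTerms) {
    return null; // the user has not agreed, so do not create the account
  }
  return {
    email: form.email,
    termsVersion,
    agreedAt: now.toISOString(),
  };
}

const blocked = recordConsent({ email: "a@example.com", agreedToTerms: false }, "2024-01");
const accepted = recordConsent({ email: "a@example.com", agreedToTerms: true }, "2024-01");
console.log(blocked === null); // true
console.log(accepted?.termsVersion); // 2024-01
```

Keeping the check in a plain function rather than in the page markup means the same rule can be applied on the server, where a user cannot bypass it by editing the form.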

Summary

A Terms and Conditions agreement is a legal document that outlines the rules that users must follow in order to use your products, services, websites, or apps.

User-generated content is content that users create and upload to a website, app, or online platform.

User-generated content can be uploaded in text, image, video, or audio format. It can include social media posts and comments, comments on articles or blog posts, product reviews, and video and audio uploads.

If you allow users to upload or share their own content on your website or platform, you should use your Terms and Conditions agreement to address user-generated content.

The user-generated content clauses in your Terms and Conditions agreement can include the following:

- Who owns user-generated content

- Prohibited user-generated content

- Authorized uses for user-generated content

- How user-generated content can be removed

Make sure to display your Terms agreement where users can find it both before and after submitting any user-generated content, and have them consent to be bound by the Terms you set forth.
